# Feedback loops is all you need

Every time I talk to someone about working with LLMs, the conversation ends up in the same place. Not models, not benchmarks, not even tooling. Feedback loops.

Let me tell you how I got there.

## Step 1: A prompt and an LLM

At the beginning, there is just you, a prompt, and a model.

You type something, the model answers, you tweak, you try again. It feels magical the first few times. You are a human compiler, hand-assembling prompts until the output stops being embarrassing.

This stage is fine for playing around. It is a terrible place to build anything.

The prompt lives in your head (or in a scratch file somewhere). You cannot tell if a change made things better or worse. You are optimizing by vibes.

## Step 2: An agent with customizable prompts

Sooner or later the prompt escapes the chat window.

You pull it into a file. You templatize the variable bits. You start versioning it like code. Maybe you give it a name, a role, a personality — the LLM gets promoted from “oracle” to “agent with a job description”.
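To make "templatize the variable bits" concrete, here is a minimal sketch using Python's standard `string.Template`. The prompt text, the `render_prompt` helper, and its parameters are all made up for illustration; in practice the template would probably live in its own version-controlled file.

```python
from string import Template

# Hypothetical prompt template. The $placeholders are the "variable bits";
# everything else is the part you version and review like code.
SUMMARIZER_PROMPT = Template(
    "You are $role.\n"
    "Summarize the following text in at most $max_sentences sentences:\n\n"
    "$text"
)


def render_prompt(text: str, role: str = "a careful technical editor",
                  max_sentences: int = 3) -> str:
    """Fill in the variable parts of the template and return the final prompt."""
    return SUMMARIZER_PROMPT.substitute(
        role=role, max_sentences=max_sentences, text=text
    )


if __name__ == "__main__":
    print(render_prompt("Feedback loops shorten the distance between change and signal."))
```

Because the template is plain text with named placeholders, a change to the wording shows up as an ordinary diff, separate from the inputs you feed it.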

This is already a big jump. Now your prompt is an artifact. You can diff it, review it, share it. You can have ten of them, each tuned for a different task.

But you still don’t know if any of them is actually good, and that gap matters more than anything you have built so far. You are making progress, but every change still means tweaking by hand and eyeballing the result.

## Step 3: Evals

Here is where most people stop, and they should not.

An eval is just a way of asking “is this prompt doing what I want, on the kinds of inputs I care about?”. A handful of test cases, a scoring function, a number at the end.
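A rough sketch of what "a handful of test cases, a scoring function, a number at the end" can look like. Everything here is an assumption for illustration: the cases, the exact-match scorer, and `fake_model`, which stands in for a real LLM call so the example runs offline.

```python
from typing import Callable, List, Tuple

# Hypothetical test cases: (input, expected answer) pairs.
CASES: List[Tuple[str, str]] = [
    ("2 + 2", "4"),
    ("capital of France", "Paris"),
    ("opposite of hot", "cold"),
]


def exact_match(output: str, expected: str) -> float:
    """Scoring function: 1.0 if the outputs match ignoring case and
    surrounding whitespace, otherwise 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_eval(model: Callable[[str], str],
             cases: List[Tuple[str, str]] = CASES) -> float:
    """Run the model on every case and return the mean score."""
    scores = [exact_match(model(question), expected) for question, expected in cases]
    return sum(scores) / len(scores)


def fake_model(question: str) -> str:
    """Stand-in for a real LLM call; it only knows two of the three answers."""
    canned = {"2 + 2": "4", "capital of France": "paris"}
    return canned.get(question, "I don't know")


if __name__ == "__main__":
    print(f"score: {run_eval(fake_model):.2f}")
```

Exact match is the crudest scorer there is. Real tasks usually need something fuzzier, but the shape stays the same: cases in, one number out.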

It sounds boring. It is transformative.

The moment you have evals, every change to the prompt has a signal. You stop arguing about whether the new version is “better-ish” and start looking at a score. You discover that the clever phrasing you loved actually tanks performance on half your cases. You discover that a two-line change beats a full rewrite.

Evals turn prompt engineering from vibes-based into something that at least resembles engineering.

Don’t get me wrong: evals are hard to design well. Picking the right test cases, writing a scoring function that actually correlates with what you care about, and avoiding overfitting to those cases is real work. But even a rough eval beats no eval.
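One common guard against overfitting, borrowed from ordinary machine learning practice rather than anything specific to prompts, is to keep a few cases aside and never tune against them. A minimal sketch, where `split_cases` and its parameters are hypothetical names:

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_cases(cases: Sequence[T], holdout_fraction: float = 0.25,
                seed: int = 0) -> Tuple[List[T], List[T]]:
    """Deterministically split cases into (dev, holdout).

    Tune the prompt against `dev` only; check `holdout` occasionally to see
    whether the gains generalize. At least one case is always held out.
    """
    shuffled = list(cases)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split stable
    n_holdout = max(1, int(len(shuffled) * holdout_fraction))
    return shuffled[n_holdout:], shuffled[:n_holdout]


if __name__ == "__main__":
    dev, holdout = split_cases([f"case-{i}" for i in range(8)])
    print(len(dev), "dev cases,", len(holdout), "held-out cases")
```

If the dev score keeps climbing while the held-out score stalls, the prompt is probably memorizing your test cases rather than getting better.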

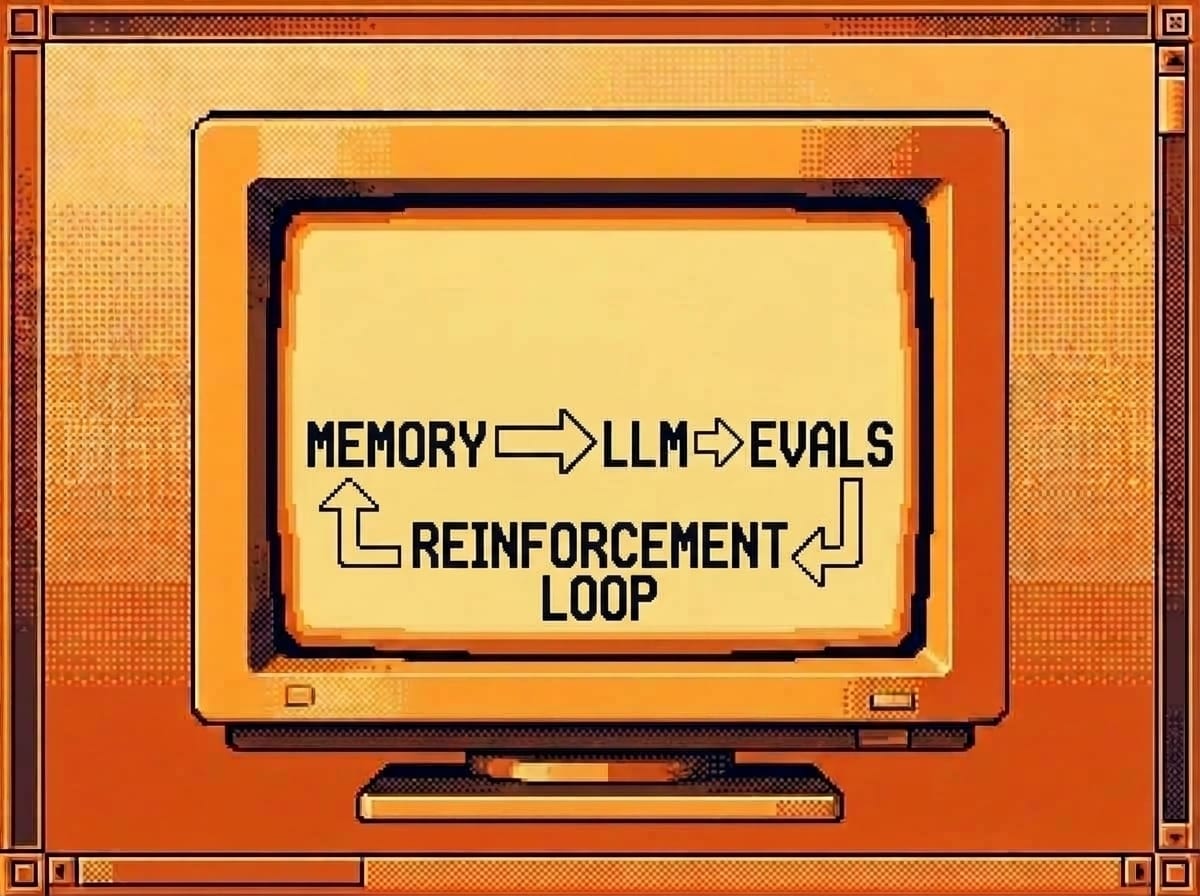

## Step 4: The improvement loop

Once you have evals, something sneaky becomes possible.

If a prompt is just text, and evals give you a number, then “improving the prompt” is an optimization problem. And we already have a tool that is weirdly good at rewriting text given a target (surprise!): the same LLMs we are building on top of.

So you close the loop. You let a system propose variants of the prompt, run them against the evals, keep what scores higher, and iterate. The prompt improves itself.
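The loop described above can be sketched as a plain hill climb: propose a variant, score it, keep it only if it beats the current best. This is one simple strategy among many, not a claim about how any particular tool does it. The `propose` and `score` callables are assumptions; in a real system `propose` would ask an LLM for a rewrite and `score` would run your evals. The toy versions below exist only so the sketch runs offline.

```python
import random
from typing import Callable, Tuple


def improve(prompt: str,
            propose: Callable[[str], str],
            score: Callable[[str], float],
            rounds: int = 20) -> Tuple[str, float]:
    """Greedy hill climbing: keep a candidate only if it scores strictly higher."""
    best, best_score = prompt, score(prompt)
    for _ in range(rounds):
        candidate = propose(best)
        candidate_score = score(candidate)
        if candidate_score > best_score:
            best, best_score = candidate, candidate_score
    return best, best_score


# Toy stand-ins (hypothetical): the "eval" rewards prompts that contain
# certain instructions, and the "proposer" appends one of them at random.
HINTS = ["Be concise.", "Cite sources.", "Answer in English."]


def toy_score(prompt: str) -> float:
    """Fraction of the hint instructions that appear in the prompt."""
    return sum(hint in prompt for hint in HINTS) / len(HINTS)


def make_toy_proposer(seed: int = 0) -> Callable[[str], str]:
    """Return a proposer that appends a randomly chosen hint to the prompt."""
    rng = random.Random(seed)

    def propose(prompt: str) -> str:
        return f"{prompt} {rng.choice(HINTS)}"

    return propose


if __name__ == "__main__":
    best, best_score = improve("Summarize the text.", make_toy_proposer(), toy_score)
    print(f"{best_score:.2f}  {best}")
```

Accepting only strict improvements keeps the prompt from growing when a change adds nothing, at the cost of getting stuck on plateaus. That trade-off is part of why the quality of the evals matters so much here.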

This is the whole arc: prompt → customizable prompt → evaluated prompt → self-improving prompt. Each step is a tighter feedback loop than the one before.

OK, it sounds like science fiction. It isn’t, really. The pieces are all boring individually: a prompt in a file, a set of evals, and a model proposing edits. What makes it work is that each iteration nudges the prompt a little closer to the goal, as measured by the evals. Once you have that, it is mostly a matter of letting it run.

## Why this is the whole game

Notice what happened. We never talked about which model to use, or context windows, or fancy agent frameworks. We just kept shortening the distance between “change” and “signal”.

That is the pattern. Feedback loops all the way down.

If your prompts live only in your head, shorten that loop — put them in a file. If they live in a file but you cannot measure them, shorten that loop — write an eval. If you have evals but a human is manually tweaking, shorten that loop — let the system iterate.

The model gets better every few months on its own. Your feedback loops don’t. That is where your leverage is.

Happy hacking!